Making Sense of Amazon Redshift Pricing

Considering building a data warehouse in Amazon Redshift? It’s a great option, even in an increasingly crowded market of cloud data warehouse platforms. If you’re new to Redshift, one of the first challenges you’ll be up against is understanding how much it’s all going to cost. Amazon Web Services (AWS) is known for its plethora of pricing options, and Redshift in particular has a complex pricing structure. Let’s dive into how Redshift is priced, and what decisions you’ll need to make.

On-Demand vs. Reserved Instances

There are two ways you can pay for a Redshift cluster: on demand or with reserved instances. This choice has nothing to do with the technical aspects of your cluster; it’s all about how and when you pay.

When you pay for a Redshift cluster on demand, you pay for each hour your cluster is running each month. When you choose this option you don’t pay anything up front. For most production use cases, however, your cluster will be running 24×7, so it’s best to price out what it would cost to run it for about 720 hours per month (30 days × 24 hours). Why not just shut it down when it’s idle? Because it’s actually a bit of work to snapshot your cluster, delete it and then restore from the snapshot. That said, it’s nice to be able to spin up a new cluster for development or testing and only pay for the hours you need.
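To make the 720-hour math concrete, here is a minimal sketch of an on-demand estimate. The function name and the $0.25 per node-hour rate are made up for illustration; plug in the real rate for your node type and region.

```python
HOURS_PER_MONTH = 30 * 24  # 720 hours, the always-on figure used above


def on_demand_monthly_cost(hourly_rate_per_node: float,
                           node_count: int,
                           hours_running: float = HOURS_PER_MONTH) -> float:
    """Estimate one month of on-demand cost for a cluster."""
    if node_count < 1:
        raise ValueError("a cluster needs at least one node")
    if hours_running < 0:
        raise ValueError("hours_running cannot be negative")
    return hourly_rate_per_node * node_count * hours_running


# Hypothetical rate of $0.25 per node-hour (NOT a real AWS price).
production = on_demand_monthly_cost(0.25, node_count=2)  # running 24x7
dev = on_demand_monthly_cost(0.25, node_count=1, hours_running=40)
print(f"production: ${production:.2f}, dev: ${dev:.2f}")
```

The gap between the two numbers is the whole argument for on-demand development clusters: you only pay for the hours they actually run.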

Reserved instances work quite differently. When you choose this option you’re committing to either a 1-year or a 3-year term. In addition, you can choose how much you pay upfront for the term:

- No upfront payment

- Partial upfront payment

- Full upfront payment

The longer your term, and the more you pay upfront, the more you’ll save compared to paying on demand. As of the publication of this post, the maximum you can save is 75% vs. an identical cluster on demand (3-year term, all upfront).
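If you want to compare a reserved quote against on-demand pricing yourself, the arithmetic looks roughly like this. It’s a sketch: the function name and every dollar figure below are hypothetical, chosen so the result lands on the 75% maximum mentioned above.

```python
HOURS_PER_YEAR = 365 * 24


def reserved_savings(on_demand_hourly: float,
                     upfront_payment: float,
                     reserved_hourly: float,
                     term_years: int) -> float:
    """Fraction saved over the whole term by reserving instead of paying on demand."""
    if term_years not in (1, 3):
        raise ValueError("reserved terms are 1 or 3 years")
    hours = term_years * HOURS_PER_YEAR
    on_demand_total = on_demand_hourly * hours
    reserved_total = upfront_payment + reserved_hourly * hours
    return 1 - reserved_total / on_demand_total


# Hypothetical node: $1.00/hour on demand, or $6,570 all upfront for 3 years.
savings = reserved_savings(1.00, upfront_payment=6570,
                           reserved_hourly=0.0, term_years=3)
print(f"{savings:.0%}")  # 75%
```

For a no-upfront or partial-upfront quote, pass the discounted hourly rate as `reserved_hourly` and whatever you pay on day one as `upfront_payment`.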

Which option should you choose? It depends on how sure you are about your future with Redshift and how much cash you’re willing to spend upfront. I typically advise clients to start on demand and after a few months see how they’re feeling about Redshift. At that point, take on at least a 1-year term and pay all upfront if you can. The savings are significant.

It’s also worth noting that even if you decide to pay for a cluster with reserved instance pricing, you’ll still have the option to create additional clusters and pay on-demand. Such an approach is often used for development and testing where subsequent clusters do not need to be run most of the time.

Dense Compute vs. Dense Storage vs. RA3 Nodes

The first technical decision you’ll need to make is choosing a node type. A Redshift data warehouse is a collection of computing resources called nodes, which are grouped into a cluster. There are three node types: dense compute (DC), dense storage (DS) and RA3.

Dense compute nodes are optimized for processing data but are limited in how much data they can store. A good rule of thumb is that if you have less than 500 GB of data, it’s best to choose dense compute. In that case, not only will you get faster queries but you’ll also save between 25% and 60% vs. a similar cluster with dense storage nodes.

As you probably guessed, dense storage nodes are optimized for warehouses with a lot more data: more than 500 GB, based on our rule of thumb. For lower data volumes, dense storage doesn’t make much sense, as you’ll pay more and drop from the faster SSD (solid state drive) storage on dense compute nodes to the HDD (hard disk drive) storage used in dense storage nodes.

RA3 nodes are the newest node type, introduced in December 2019. These nodes let you scale and pay for compute and storage independently, so you can size your cluster based only on your compute needs.

Which option should you choose? Choose based on how much data you have now, or what you expect to have in the next 1 or 3 years if you choose to pay for a reserved instance. If 500 GB sounds like more data than you’ll have within your desired time frame, choose dense compute.

The introduction of RA3 nodes makes the decision a little more complicated in cases where your data volume is, or will soon be, on the high end. These nodes only come in one size, xlarge (see Node Size below), and have 64 TB of storage per node! With a minimum cluster size (see Number of Nodes below) of 2 nodes for RA3, that’s 128 TB of storage minimum. In most cases, this means that you’ll only need to add more nodes when you need more compute rather than to add storage to a cluster.
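The rule of thumb and the RA3 storage math from this section can be written down in a few lines. Treat this as a sketch of my rule of thumb rather than an official sizing tool; the function names are my own, and the thresholds are simply the figures quoted above.

```python
DENSE_COMPUTE_THRESHOLD_GB = 500  # rule-of-thumb cutoff from this post
RA3_STORAGE_PER_NODE_TB = 64      # per-node figure quoted above
RA3_MIN_NODES = 2                 # minimum RA3 cluster size quoted above


def suggest_node_type(expected_data_gb: float) -> str:
    """Apply the 500 GB rule of thumb to the data you expect over your term."""
    if expected_data_gb < DENSE_COMPUTE_THRESHOLD_GB:
        return "dense compute"
    return "dense storage or RA3"


def ra3_storage_tb(node_count: int) -> int:
    """Total storage of an RA3 cluster with the given number of nodes."""
    if node_count < RA3_MIN_NODES:
        raise ValueError(f"RA3 clusters need at least {RA3_MIN_NODES} nodes")
    return node_count * RA3_STORAGE_PER_NODE_TB


print(suggest_node_type(200))  # dense compute
print(ra3_storage_tb(2))       # 128
```

Pass in the volume you expect at the end of your reserved term, not just what you have today.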

Node Size

Once you’ve chosen your node type, it’s time to choose your node size. This is simply how powerful the node is. Specifically, it determines:

- How many virtual CPUs the node has

- How many Amazon EC2 compute units (ECUs) the node has

- The amount of RAM available to the node

- The storage capacity of the node

- The I/O (Input/Output) speed of the node

There are two node sizes: large and extra large (known as xlarge). When you combine the choices of node type and size you end up with the 5 options below. Note that the current generation of dense compute and dense storage nodes as of this publication is generation 2 (hence dc2 and ds2).

- dc2.large (dense compute, large size)

- dc2.8xlarge (dense compute, extra large size)

- ds2.xlarge (dense storage, the smaller size, which AWS labels xlarge)

- ds2.8xlarge (dense storage, extra large size)

- ra3.16xlarge (RA3, extra large)

Which one do I choose? You’ve already chosen your node type, so you have two choices here. When you’re getting started, it’s best to start small and experiment. Extra large nodes are about 8 times more expensive than large nodes, so unless you need the resources, go with large. Before you lock into a reserved instance, experiment and find your limits.

When it comes to RA3 nodes, there’s only one choice, xlarge, so at least that decision is easy!

Number of Nodes

As noted above, a Redshift cluster is made up of nodes. In addition to choosing node type and size, you need to select the number of nodes in your cluster.

If you choose “large” nodes of either type, you can create a cluster with between 1 and 32 nodes. For “xlarge” nodes, you need at least 2 nodes but can go up to 128 nodes.
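If you’re scripting cluster plans, those limits are easy to check up front. A small sketch, where the dictionary and function names are mine and the limits are the ones stated above:

```python
# (min_nodes, max_nodes) per node size, as described above.
NODE_COUNT_LIMITS = {
    "large": (1, 32),
    "xlarge": (2, 128),
}


def is_valid_node_count(node_size: str, node_count: int) -> bool:
    """Return True if node_count is allowed for the given node size."""
    if node_size not in NODE_COUNT_LIMITS:
        raise ValueError(f"unknown node size: {node_size!r}")
    minimum, maximum = NODE_COUNT_LIMITS[node_size]
    return minimum <= node_count <= maximum


print(is_valid_node_count("large", 1))    # True
print(is_valid_node_count("xlarge", 1))   # False
```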

How many nodes should I choose? Sizing your cluster all depends on how much data you have, and how many computing resources you need. At this point it becomes a math problem as well as a technical one. There are benefits to distributing data and queries across many nodes, and node size and type matter too (note: you can’t mix node types; every node in a cluster must be the same type). When you’re starting out, or if you have a relatively small dataset, you’ll likely only have one or two nodes. A common starting point is a single-node dense compute cluster. Beyond that, cluster sizing is a complex technical topic of its own.

Don’t Forget about Regions

One final decision you’ll need to make is which AWS region you’d like your Redshift cluster hosted in. Believe it or not, the region you pick will impact the price you pay per node. In other words, the same node size and type will cost you more in some regions than in others.

Which one should I choose? Price is one factor, but you’ll also want to consider where the data you’ll be loading into the cluster is located (see Other Costs below), where resources accessing the cluster are located, and any client or legal concerns you might have regarding which countries your data can reside in. Choosing a region is very much a case-by-case process, but don’t be surprised by the price disparities!
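One way to frame the region decision is to filter first and compare prices second: rule out the regions you can’t use for legal or latency reasons, then pick the cheapest of what’s left. A sketch, with a function name of my own and per-node rates that are entirely made up (only the region identifiers are real):

```python
def cheapest_allowed_region(hourly_rates: dict[str, float],
                            allowed_regions: set[str]) -> str:
    """Pick the lowest-priced region among those you are allowed to use."""
    candidates = {region: rate for region, rate in hourly_rates.items()
                  if region in allowed_regions}
    if not candidates:
        raise ValueError("no priced region satisfies the constraints")
    return min(candidates, key=candidates.get)


# Hypothetical per-node hourly rates (NOT real AWS prices).
rates = {"us-east-1": 0.25, "eu-west-1": 0.30, "ap-southeast-2": 0.33}

# Suppose client contracts rule out US hosting.
print(cheapest_allowed_region(rates, {"eu-west-1", "ap-southeast-2"}))  # eu-west-1
```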

Other Costs

In addition to choosing how you pay (on demand vs. reserved), node type, node size, cluster size and region, you’ll also need to consider a few more costs. All of these are less likely to impact you if you have a small-scale warehouse or are early in your development process. It’s good to keep them in mind when budgeting, however.

- Concurrency scaling

- Backup Storage

- Data Transfer

- Additional AWS Resources

Concurrency scaling is how Redshift adds and removes capacity automatically to deal with the fact that your warehouse may experience inconsistent usage patterns through the day. This is an optional feature, and may or may not add cost. See the AWS documentation on concurrency scaling to learn more.

Backup Storage is used to store snapshots of your cluster. The amount of space backups eat up depends on how much data you have, how often you snapshot your cluster, and how long you retain the backups. You get a certain amount of space for your backups included based on the size of your cluster. Additional backup space will be billed to you at standard S3 rates. See the Redshift pricing page for backup storage details. I find that the included backup space is often sufficient.
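Since you’re only billed for backup space beyond what’s included, the estimate is a simple overage calculation. In this sketch the function name, the included allowance and the per-GB rate are all placeholders; look up the real figures on the pricing page.

```python
def extra_backup_cost(backup_used_gb: float,
                      included_gb: float,
                      rate_per_gb_month: float) -> float:
    """Monthly charge for backup storage beyond the included allowance."""
    billable_gb = max(0.0, backup_used_gb - included_gb)
    return billable_gb * rate_per_gb_month


# Hypothetical numbers: 1,200 GB of snapshots, 1,000 GB included,
# and an assumed rate of $0.025 per GB-month (NOT a quoted AWS price).
print(f"${extra_backup_cost(1200, 1000, 0.025):.2f}")

# Staying under the allowance costs nothing extra.
print(f"${extra_backup_cost(800, 1000, 0.025):.2f}")
```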

Data transfer costs depend on how much data you’re transferring into and out of your cluster, how often, and from where. The good news is that if you’re loading data in from the same AWS region (and transferring out within the region), it won’t cost you a thing. Again, check the Redshift pricing page for the latest rates.

Finally, if you’re running a Redshift cluster you’re likely using some other AWS resources to complete your data warehouse infrastructure: S3 storage, EC2 instances for data processing, AWS Glue for ETL, and so on. Again, these costs are dependent on your situation, but in most cases they’re quite small in comparison to the cost of your cluster.

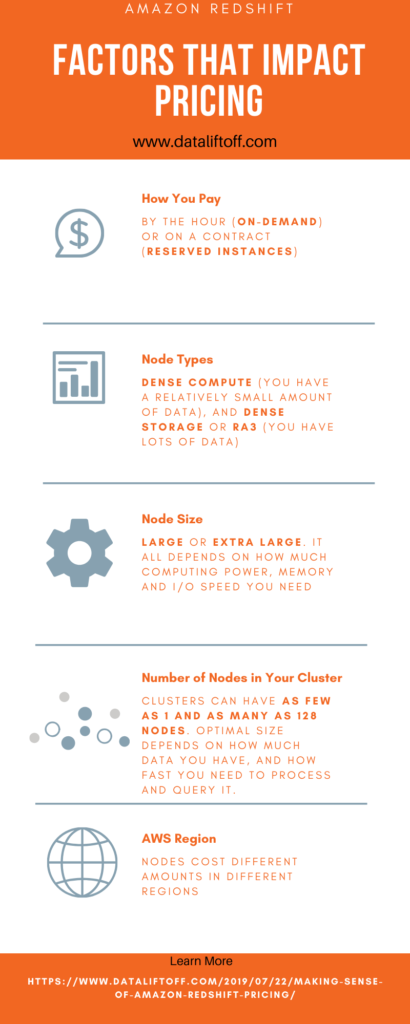

Cheat Sheet and Summary

With all that in mind, determining how much you’ll pay for your Redshift cluster comes down to the following factors:

- How you want to pay: by the hour (on-demand) or on a contract (reserved instances)

- Node types: dense compute (you have a relatively small amount of data), dense storage (you have lots of data), or RA3 (you want to scale compute and storage independently)

- Node size: large or extra large. It all depends on how much computing power, memory and I/O speed you need.

- Number of nodes in your cluster: Clusters can have as few as 1 and as many as 128 nodes. Optimal size depends on how much data you have, and how fast you need to process and query it.

- The region you choose to host your cluster in: Nodes cost different amounts in different regions

- Additional costs: Depending on how you architect your data pipelines and processing, you might need additional storage, compute or ETL services from AWS.

Amazon is always adjusting the price of AWS resources. Now that you understand how Redshift pricing is structured, you can check the current rates on the Redshift pricing page.

Need help planning for or building out your Redshift data warehouse? Learn more about me and what services I offer.